In the coming weeks, Instagram will start notifying parents using supervision if their teen repeatedly tries to search for terms related to suicide or self-harm within a short period of time. This is the latest protection for Teen Accounts and Instagram’s parental supervision features.

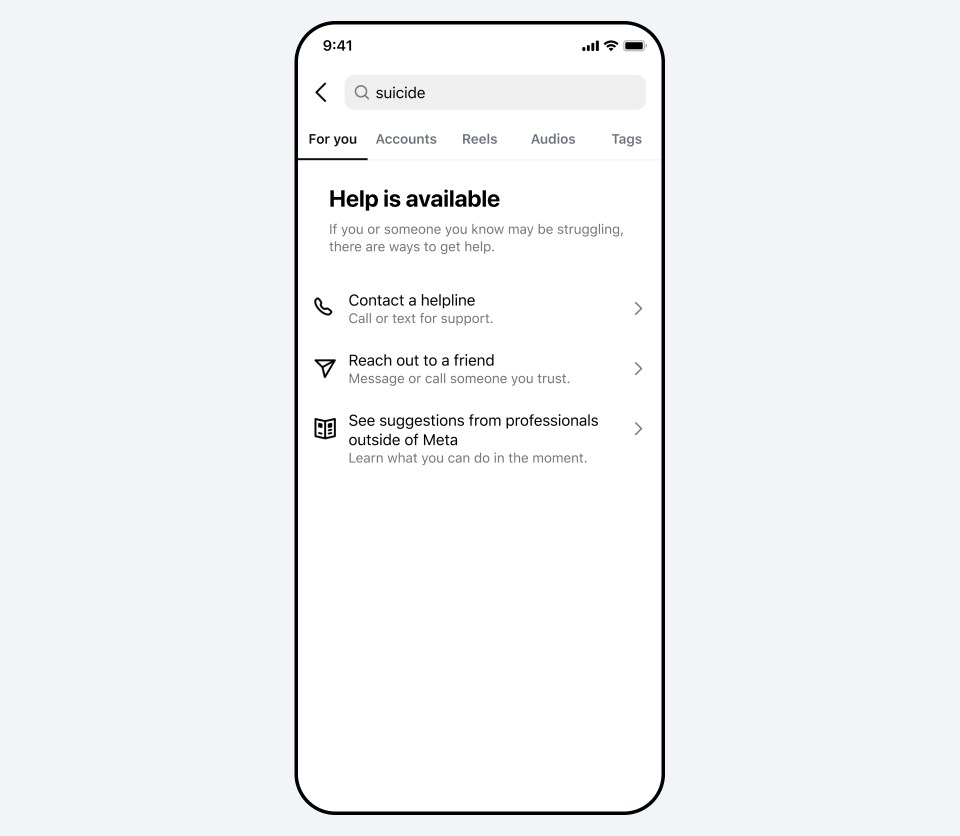

We understand how sensitive these issues are, and how distressing it could be for a parent to receive an alert like this. The vast majority of teens do not try to search for suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can offer support. These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen.

How the Alerts Will Work

Next week, parents and teens enrolled in supervision will be notified that Instagram will start sending these new alerts to parents, based on their teens’ search activity. Attempted searches that would prompt the alert include phrases promoting suicide or self-harm, phrases that suggest a teen wants to harm themselves, and terms like ‘suicide’ or ‘self-harm’.

The alerts will be sent to parents via email, text, or WhatsApp, depending on the contact information available, as well as through an in-app notification. Tapping on the notification will open a full-screen message explaining that their teen has repeatedly tried to search Instagram for terms associated with suicide or self-harm within a short period of time. Parents will also have the option to view expert resources designed to help them approach potentially sensitive conversations with their teen.

These alerts will roll out to parents who use Instagram’s parental supervision tools in the US, UK, Australia, and Canada next week, and will become available in other regions later this year.

Striking the Right Balance

Our goal is to empower parents to step in if their teen's searches suggest they may need support. We also want to avoid sending these notifications unnecessarily, because sending too many could make them less useful overall.

“When a young person searches about suicide or self-harm, empowering a parent to step in can be extremely important. The fact that Meta has now built this in is a meaningful step forward and is the kind of change that child safety experts have been pushing for.”

– Dr Sameer Hinduja, Co-Director of the Cyberbullying Research Center

In working to strike this important balance, we analyzed Instagram search behavior and consulted with experts from our Suicide and Self-Harm Advisory Group. We chose a threshold that requires a few searches within a short period of time, while still erring on the side of caution. This means we may sometimes notify parents when there is no real cause for concern, but we feel, and experts agree, that this is the right starting point. We'll continue to monitor the alerts and listen to feedback to make sure the threshold is in the right place.

“It’s vital that parents have the information they need to support their teens. This is a really important step that should help give parents greater peace of mind – if their teen is actively trying to look for this type of harmful content on Instagram, they’ll know about it.”

– Vicki Shotbolt, CEO of Parent Zone

Building on Existing Protections

These alerts build on our existing work to help protect teens from potentially harmful content on Instagram. We have strict policies against content that promotes or glorifies suicide or self-harm and, while we do allow people to share content about their own struggles with these issues, we hide this content from teens, even if it’s shared by someone they follow.

We work to block searches for terms clearly associated with suicide and self-harm, including terms that violate our suicide and self-harm policies. For these searches, we don't show any results and instead direct people to resources and local organizations that can help. We also direct people to resources and helplines when their searches relate to mental health more broadly rather than specifically to suicide and self-harm. We'll continue to alert emergency services when we become aware of anyone at imminent risk of physical harm; these interventions have saved lives.

We’re launching these alerts on Instagram search first, but we know teens are increasingly turning to AI for support. While our AI is already trained to respond safely to teens and provide resources on these topics as appropriate, we’re now building similar parental alerts for certain AI experiences. These will notify parents if a teen attempts to engage in certain types of conversations related to suicide or self-harm with our AI. This is important work and we’ll have more to share in the coming months.